Try to buy a single-core laptop today and you’ll have a difficult time even finding one. The leading computer manufacturers offer at least dual-core processors in the base models of their economy lines, laptops included. Let that sink in: the days of single-core machines, even laptops, are over. Many of the leading mobile products are also at least dual-core, and higher-end products have even more cores.

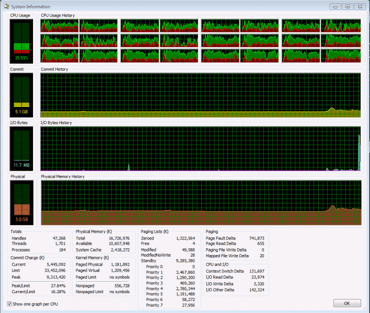

As a developer, it’s exciting to have this hardware available and to know it’ll only get better. It’s even more exciting to be able to use it for development. Perhaps even more enjoyable is seeing your customers use your software to push their multi-core hardware to its limits. If you’ve ever looked at the processor utilization on a multi-core machine and watched every one of its cores max out, chewing through all the work you were throwing at it, you know that feeling of satisfaction. It’s the satisfaction of knowing that you’re using the hardware to its fullest.

However, all the cores in the world won’t magically make the software you run faster: that software has to be written to use them. 3D InterOp from Spatial has been around for many years, and much of it was established before the multi-core revolution. As a result, its file translations have been sequential. But just because the file translation process has always been sequential doesn’t mean it needs to be. In fact, we are already exploring and implementing multiple strategies to catapult 3D InterOp into the multi-core world.

Two distinct high-level strategies exist for taking advantage of multi-core machines: multi-threading and multi-processing. Multi-threading runs several threads inside a single process; the threads share that process’s memory and can run on different cores at the same time. Multi-processing runs several separate processes, each with its own memory space, which the operating system can likewise spread across the cores. Which strategy should be used? Which offers the most bang for the buck? Which performs better? Which scales better? These questions can’t be answered without understanding what your code does and how it does it.
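
To make the memory difference concrete, here is a minimal Python sketch (Python is used only for brevity; nothing here is 3D InterOp code, and the function names are hypothetical). A thread writes directly into a dictionary owned by the main thread, while a child process, started here with `subprocess`, has its own interpreter and memory, so its result only reaches the parent through an explicit channel (its standard output).

```python
import subprocess
import sys
import threading
from typing import Tuple


def thread_demo() -> int:
    """Threads run inside one process and share its memory."""
    shared = {"count": 0}

    def worker() -> None:
        # Writes straight into the dict owned by the calling thread.
        shared["count"] += 1

    t = threading.Thread(target=worker)
    t.start()
    t.join()
    return shared["count"]  # the worker's write is visible here


def process_demo() -> Tuple[int, int]:
    """A child process has its own address space; results must be sent
    back explicitly (here: over the child's stdout)."""
    shared = {"count": 0}
    # The child starts a fresh interpreter and cannot see or modify `shared`.
    child_code = "count = 0\ncount += 1\nprint(count)"
    result = subprocess.run(
        [sys.executable, "-c", child_code],
        capture_output=True, text=True, check=True,
    )
    child_count = int(result.stdout.strip())
    return shared["count"], child_count  # parent's dict is untouched


if __name__ == "__main__":
    print(thread_demo())   # 1
    print(process_demo())  # (0, 1)
```

The flip side of isolation is cost: a thread can read shared data for free, while a process has to receive its input and send back its output through some form of inter-process communication.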

So let’s dive into the code. First, is it thread-safe? If it is, multi-threading is an option. If not, you have to decide whether you’re willing to invest the time and resources needed to make it thread-safe. If that isn’t possible, you’re left with the multi-processing strategy. Because multi-processing uses separate processes, memory is not shared by default, so thread safety is a non-issue. Next, you’ll need to determine exactly what you want to parallelize. Often this will be a performance bottleneck that you know most of your users encounter. From there, you have to analyze the relevant algorithms, determine how to split up their work, and begin parallelizing.
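
As a rough illustration of the two steps just described (splitting the work and keeping shared state thread-safe), here is a minimal Python sketch. `process_item`, `Progress`, `split_into_chunks`, and `run_parallel` are hypothetical names chosen for this example and are not part of 3D InterOp; the "work" is just squaring integers.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import List


def split_into_chunks(items: List[int], n_chunks: int) -> List[List[int]]:
    """Split work into at most n_chunks contiguous, roughly equal slices."""
    size, rem = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < rem else 0)
        if start < end:  # skip empty slices when there is little work
            chunks.append(items[start:end])
        start = end
    return chunks


def process_item(item: int) -> int:
    """Hypothetical stand-in for one independent unit of work."""
    return item * item


class Progress:
    """State shared by every worker; the lock makes updates thread-safe."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self.done = 0

    def add(self, n: int) -> None:
        # `self.done += n` is a read-modify-write; without the lock, two
        # threads could read the same old value and one update would be lost.
        with self._lock:
            self.done += n


def run_parallel(items: List[int], workers: int = 4) -> List[int]:
    progress = Progress()

    def handle(chunk: List[int]) -> List[int]:
        out = [process_item(x) for x in chunk]  # touches no shared state
        progress.add(len(chunk))                # shared state goes through the lock
        return out

    chunks = split_into_chunks(items, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in chunk order, so the output order is stable.
        results = [x for part in pool.map(handle, chunks) for x in part]
    assert progress.done == len(items)
    return results


if __name__ == "__main__":
    print(run_parallel(list(range(10))))
```

Note that in CPython the global interpreter lock prevents pure-Python, CPU-bound threads from running truly in parallel, so this sketch shows the structure (split the work, guard the shared state) rather than a speedup; for CPU-bound Python code a process pool is the usual route to real parallelism.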

The above is a very superficial introduction to parallelizing an application and is by no means complete. Each application will have its own set of complications when it comes to parallelization. There are lots of resources online to get you started, and if you’re even thinking of going down this road, the sooner you start, the better.

Please stay tuned for part 2 of this series to be posted after our R24 release.

How are you taking advantage of the multi-core revolution?

Which strategy do you prefer?

What issues and roadblocks have you encountered?